An open-source pipeline that turns tickets into merged PRs, autonomously.

An open-source pipeline that completed 280+ coding tasks autonomously in two weeks — across multiple projects. Here's what I learned about making AI agents reliable.

Practical lessons from real autonomous agent runs.

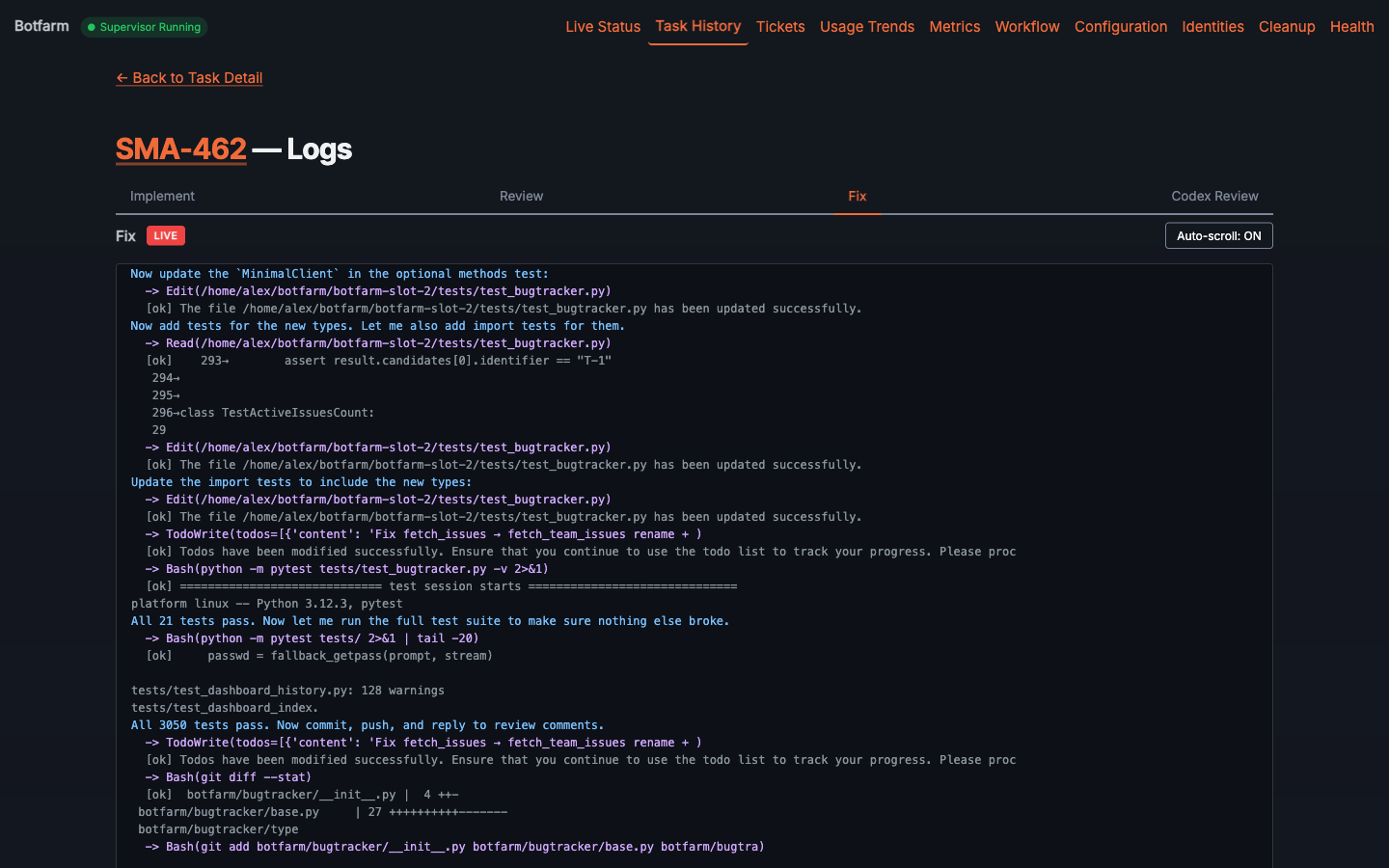

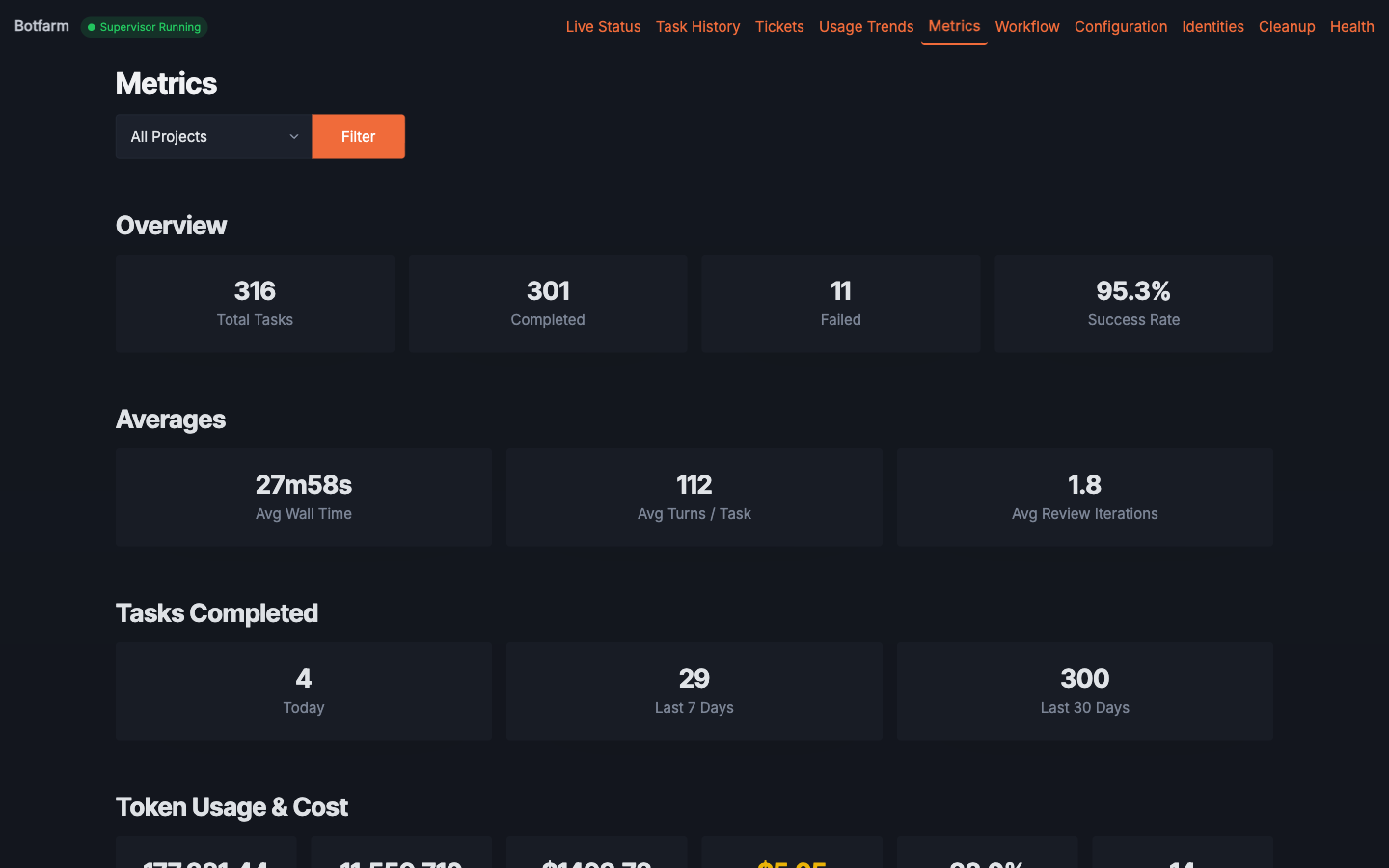

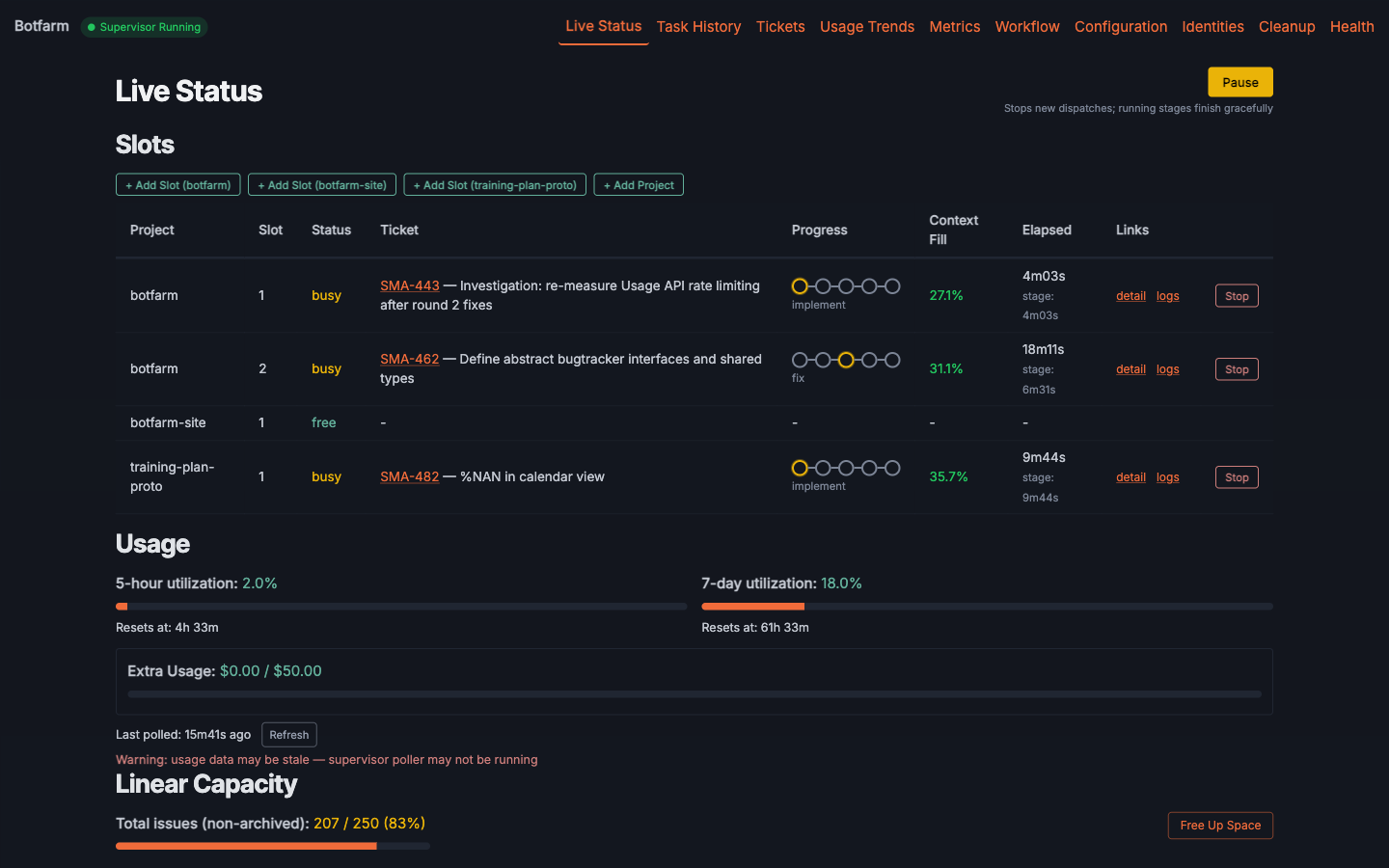

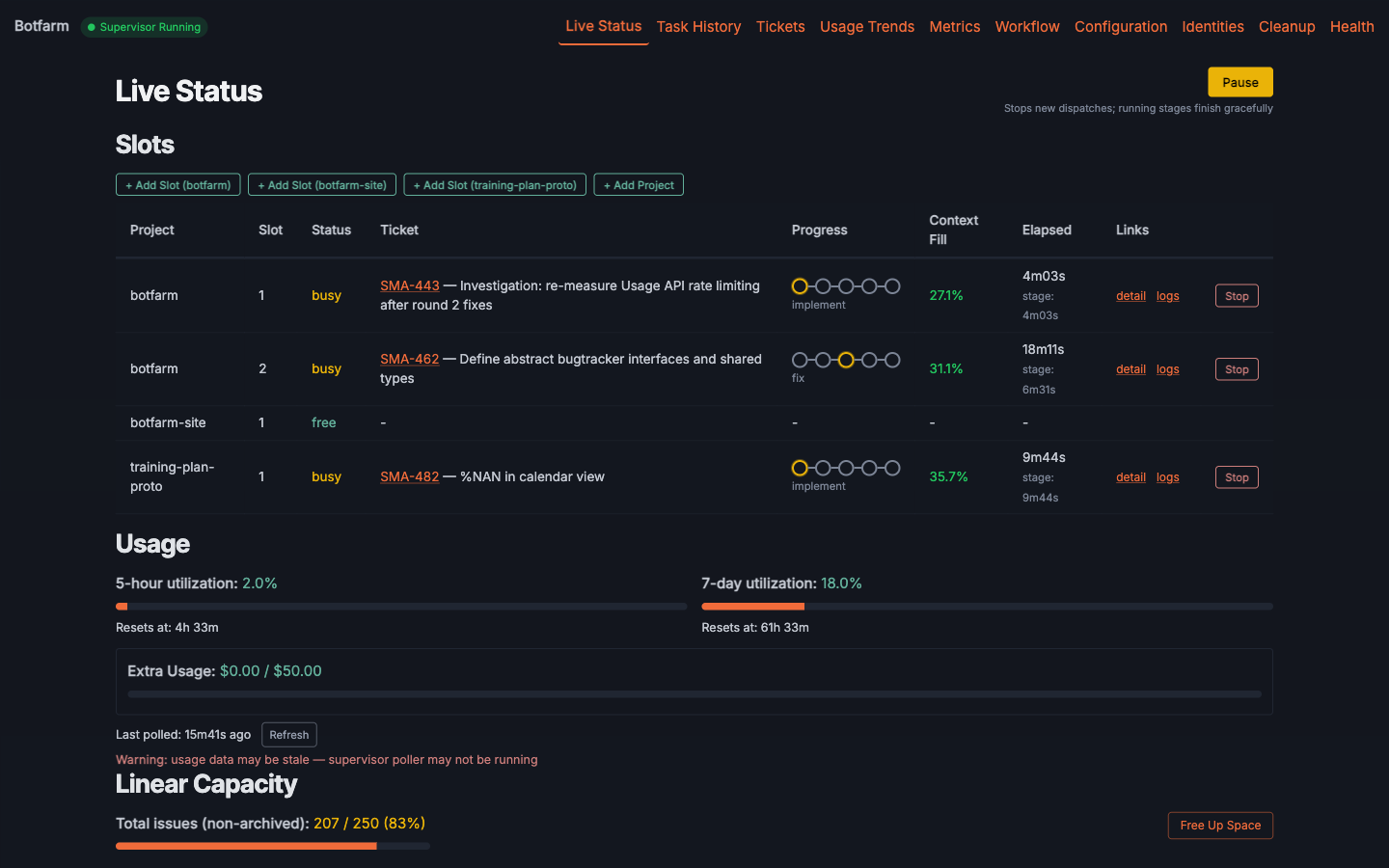

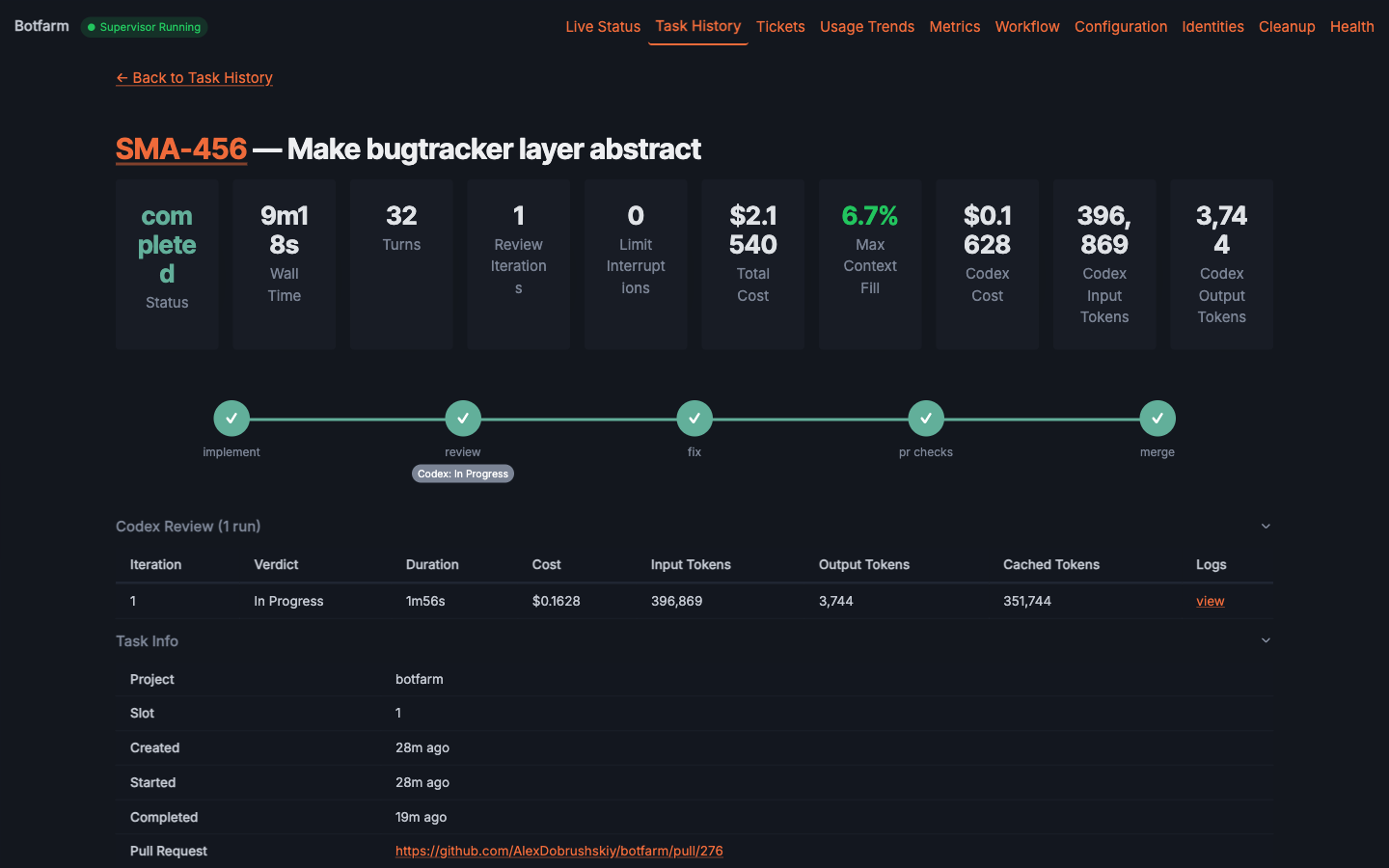

The Botfarm dashboard — pipeline progression, live status, task details, logs, and metrics.

The Pipeline

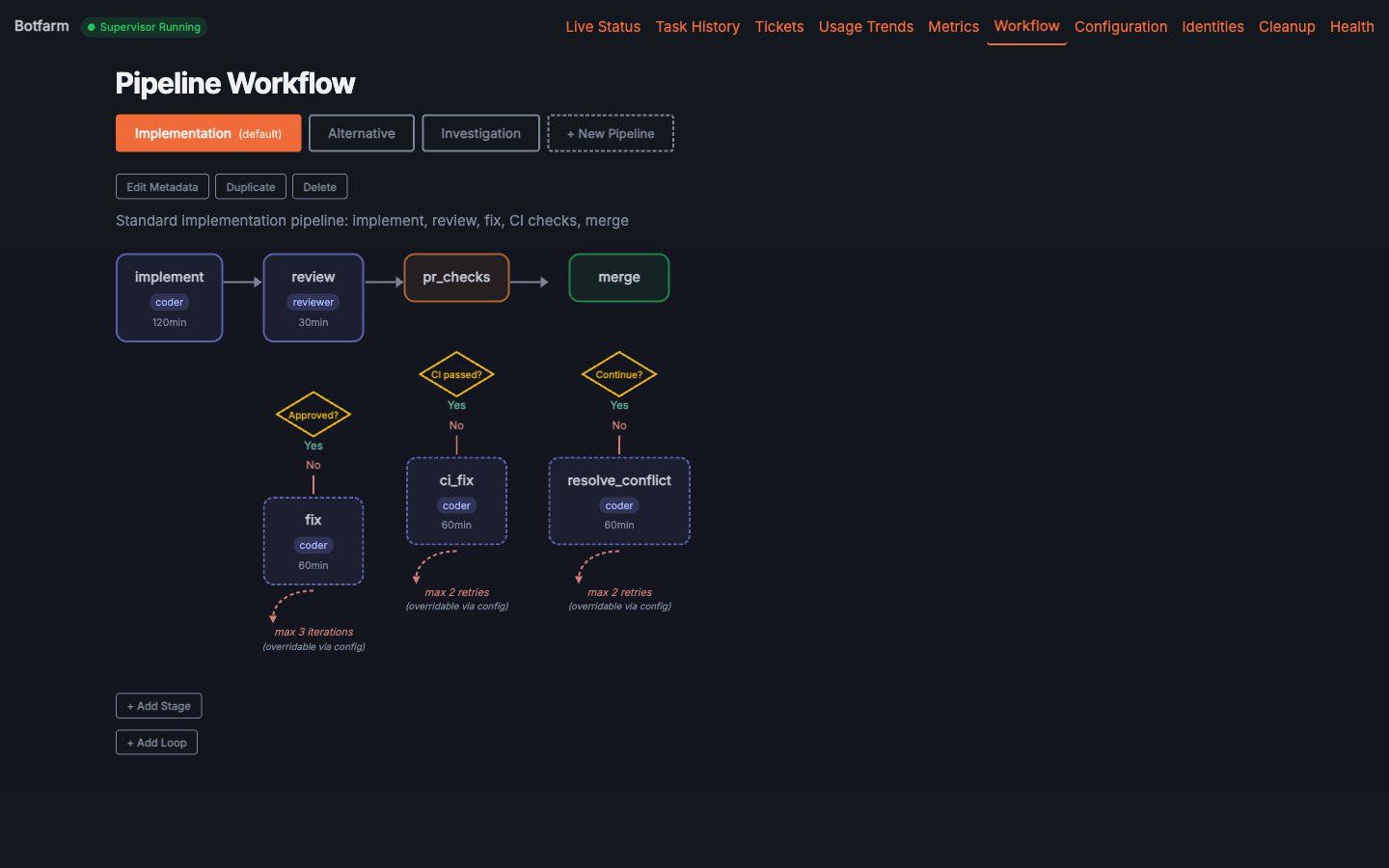

Every ticket flows through a multi-stage pipeline. Each stage gets fresh context. Review supports Claude Code and Codex.

The full workflow configuration — each stage is independently configurable.

The problem with running agents manually

With AI coding agents, every engineer becomes a manager. You're dispatching work, tracking progress, reviewing output — across multiple projects, multiple agents, multiple tickets. The actual coding is handled by the agent. The hard part is everything around it.

Running agents manually means you can't close your laptop. There's no crash recovery — when a session dies, the work dies with it. Monitoring is scattered across terminal windows. And if you're running agents across several projects, keeping track of what's done, what failed, and what's next becomes its own full-time job.

Botfarm treats this as a management problem. Linear becomes your dispatch queue. The dashboard gives you visibility. State is persisted after every stage, so crashes don't mean starting over. You close your laptop, and work continues.

Three agents running simultaneously — each on a different task, each recoverable on crash.

Lessons from building an autonomous coding pipeline

Practical lessons from building, running, and improving Botfarm — backed by data from hundreds of real tasks.

The True Price of a Claude Max Weekly Limit (in API Dollars)

I tracked 80 autonomous coding tasks to calculate the API-dollar value of Claude Max weekly limits. The $100 plan is worth ~$523/week, the $200 plan ~$1,100.

6 min read

Claude Max $100 vs $200: What You Actually Get

I tracked 3,600 usage snapshots to measure the real difference between Claude Max 5x and 20x plans. The $200 plan gives 4x burst but only 2x weekly budget.

7 min read

How Botfarm works (and why)

These aren't theoretical best practices. They're opinions forged from hundreds of real tasks.

AI reviewing AI produces better code than AI alone — and two reviewers are even better.

Independent review isn't optional — it's built into the pipeline. Botfarm supports both Claude Code and Codex as reviewers, each bringing a different perspective to catch what the implementing agent missed.

Fresh context per stage beats long-running conversations.

Each pipeline stage starts with a clean context window and only the information it needs. But fresh doesn't mean amnesic — review agents persist memory between iterations, like a developer remembering their first-pass comments on a second review.
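One way to picture "fresh but not amnesic" is a context builder that starts from scratch for every stage and hands earlier notes only to the reviewer. This is a minimal sketch, not Botfarm's actual code: `ReviewMemory`, `build_context`, and the stage names are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ReviewMemory:
    """Notes a reviewer carries from one review iteration to the next (hypothetical)."""
    notes: List[str] = field(default_factory=list)


def build_context(stage: str, ticket: str, diff: str = "",
                  memory: Optional[ReviewMemory] = None) -> Dict[str, object]:
    """Assemble a fresh context holding only what this stage needs."""
    context: Dict[str, object] = {"stage": stage, "ticket": ticket}
    if stage == "review":
        context["diff"] = diff
        # Only the reviewer sees its own earlier comments.
        context["previous_notes"] = list(memory.notes) if memory else []
    return context
```

Every other stage gets a context with no trace of earlier conversations, which keeps the window small and avoids carrying stale assumptions forward.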

Crash recovery requires persisting state after every stage.

Agents crash. Networks drop. Machines restart. Botfarm saves state after each pipeline stage — if something fails, that stage restarts, not the entire ticket from scratch.
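The idea can be sketched as a checkpoint written after each stage. The code below is an illustration under assumptions, not Botfarm's implementation: the stage names, the JSON file format, and the `run_stage` callback are all hypothetical.

```python
import json
from pathlib import Path

# Hypothetical stage names; the real pipeline's stages may differ.
STAGES = ["implement", "review", "fix", "merge"]


def load_state(path: Path) -> dict:
    """Return saved progress, or a fresh state if no checkpoint exists."""
    if path.exists():
        return json.loads(path.read_text())
    return {"completed": []}


def save_state(path: Path, state: dict) -> None:
    """Write to a temp file, then rename, so a crash mid-write leaves the old checkpoint intact."""
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps(state))
    tmp.replace(path)


def run_ticket(path: Path, run_stage) -> dict:
    """Run each unfinished stage, checkpointing after every one."""
    state = load_state(path)
    for stage in STAGES:
        if stage in state["completed"]:
            continue  # finished before the crash, skip it
        run_stage(stage)  # may raise; the checkpoint on disk is unchanged
        state["completed"].append(stage)
        save_state(path, state)
    return state
```

If `run_stage` raises during the review stage, calling `run_ticket` again with the same path skips the implement stage and retries only the review.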

Review iterations are configurable — find your own balance.

The default is up to 3 review-fix loops, but it's configurable. Some tasks need one pass, others benefit from more. It's a tradeoff between thoroughness and speed that depends on your project.
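A capped review-fix loop might look like the sketch below. The function name, the `review` and `fix` callbacks, and the return shape are assumptions made for illustration; only the default cap of 3 comes from the text above.

```python
from typing import Callable, List, Tuple


def review_fix_loop(
    review: Callable[[str], List[str]],
    fix: Callable[[str, List[str]], str],
    code: str,
    max_iterations: int = 3,
) -> Tuple[str, bool]:
    """Alternate review and fix until the reviewer is satisfied or the cap is hit.

    Returns the latest code and whether a review passed with no comments.
    """
    for _ in range(max_iterations):
        comments = review(code)
        if not comments:
            return code, True  # reviewer approved
        code = fix(code, comments)
    # Cap reached: the last fix was applied but not re-reviewed.
    return code, False
```

Raising `max_iterations` buys more chances to converge at the cost of extra agent runs per ticket; lowering it to 1 gives a single review pass followed by one fix.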

Usage limits are a feature, not a bug.

Botfarm monitors your API usage and can pause execution when you're approaching your limit — for example, at 90% of a 5-hour window — reserving the rest for your own interactive work. The threshold is configurable.
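The pause decision itself reduces to a threshold check. This is a minimal sketch with hypothetical names (`should_pause`, `used`, `limit`); how Botfarm actually reads usage figures is not shown here.

```python
def should_pause(used: float, limit: float, threshold: float = 0.90) -> bool:
    """Return True once usage reaches the configured fraction of the window's limit."""
    if limit <= 0:
        raise ValueError("limit must be positive")
    if not 0 < threshold <= 1:
        raise ValueError("threshold must be in (0, 1]")
    return used / limit >= threshold
```

With the default threshold, a scheduler would stop picking up new tickets at 95% of the window but keep going at 50%, leaving the remaining headroom for interactive sessions.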

A completed task — every stage logged, every decision traceable.

How Botfarm compares

Self-hosted, open-source, and your code never leaves your machine. Here's how that stacks up.

Why I built Botfarm

I was building another project — an AI coaching app — and realized I'd fallen into a pattern: every new feature was just me hitting "accept all" in a Claude Code interactive session. The agent was doing the work. I was just approving it.

I had dozens of feature ideas scattered across markdown files and ad-hoc prompts, with no structure and no prioritization. Then I found that Linear — a tool built for engineering managers — was exactly what I needed for dispatching and tracking agent work.

The final push was wanting to close my laptop. I needed something server-side that would keep running agents, persist state across crashes, collect stats, and send notifications when something needed my attention. That's what Botfarm became.
